The RiskTech Journal

The RiskTech Journal is your premier source for insights on cutting-edge risk management technologies. We deliver expert analysis, industry trends, and practical solutions to help professionals stay ahead in an ever-changing risk landscape. Join us to explore the innovations shaping the future of risk management.

Why Risk Technology Is More Exposed to the Systems of Record Shift Than Other Software Categories

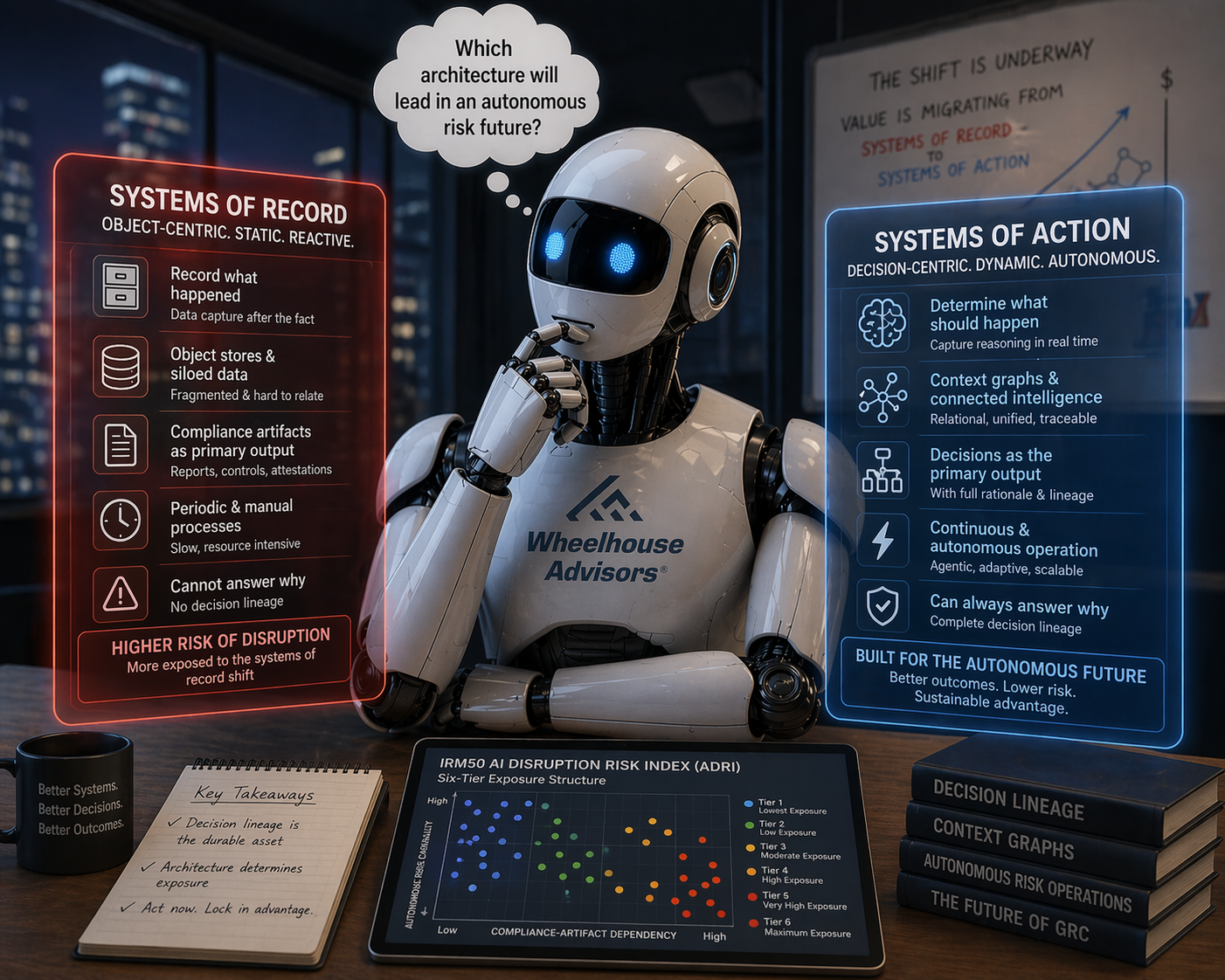

Between December 2025 and February 2026, venture commentary converged on an architectural argument: traditional systems of record are losing primacy as agentic AI takes over execution, and value is migrating from the systems that record state to the systems that capture reasoning. Sarah Wang at Andreessen Horowitz, Jamin Ball at Clouded Judgement, and Jaya Gupta and Ashu Garg at Foundation Capital each made a version of the case in pieces published within two weeks of one another.

The venture commentary drew its examples from sales, support, and finance. Those domains can tolerate lossy decision capture. Risk technology cannot. Audit, compliance, and assurance are not optional use cases bolted onto risk platforms. They are the reason the platforms exist, and each of them requires the ability to answer why something was allowed to happen.

The IRM50 AI Disruption Risk Index measures vendor-level exposure across fifty IRM and GRC platforms. The gap between tier one and tier five is not incremental. It is the difference between absorbing the shift and being absorbed by it.

What Risk Leaders Need to Know About AI Infrastructure

Risk leaders are sitting in vendor briefings where the presenter uses the words "agentic," "MCP," "orchestration," and "autonomous" in the same sentence, often without defining any of them. Most audiences nod along. A growing number are starting to ask harder questions. The ones who understand the infrastructure layer underneath the marketing claims are getting better answers.

This is not a technology article. It is a procurement and governance article. The AI infrastructure concepts that matter for risk leaders are not technical curiosities. They determine whether a vendor's agentic AI claims are architecturally real or a chat interface with a new label. They determine whether your organization's AI agents will operate within auditable guardrails or outside them. And they determine how exposed your technology investments are as AI reshapes the economics of risk and compliance delivery.

This article tells you what you need to know.

Chasing the Certificate: How AI Hype Is Putting Vendors, Buyers, and Investors at Risk

The Agentic GRC market has a sequencing problem. AI agents that autonomously collect evidence, monitor controls, and generate audit-ready documentation are real capabilities, and they are being deployed at scale before the compliance programs underneath them are mature enough to make them trustworthy.

The Delve case, in which a Y Combinator-backed platform allegedly let its agents generate auditor conclusions rather than supporting independent auditors who drew their own, is the most visible proof point of that dynamic. But the more important question is not what Delve did. It is what conditions made it possible, and whether those conditions are specific to one startup or structural to the segment.

Who is responsible when an Agentic GRC platform collapses the auditor-client boundary?

What does a buyer's procurement process need to ask to detect that collapse before it produces legal exposure?

And what does investment diligence look like for a platform category where the core product is trust itself?

The IRM Navigator Curve, developed by Wheelhouse Advisors, establishes that Foundational program integrity is not optional preparation for agentic deployment. It is the architectural prerequisite without which agentic compliance capabilities are structurally unstable.

The IRM50 AI Disruption Risk Index provides the second dimension: a structured framework for evaluating which platforms in the compliance automation segment are built on durable integrity architecture and which are carrying the kind of artifact-production dependency that the Delve allegations represent at their extreme.

This article examines the Delve case through both lenses, raises the specific questions each constituency needs to answer, and explains why the AI disruption frenzy has made all of them harder to ask and more expensive to ignore.

Professional Services Firms Admit AI Is an Existential Risk

PwC just announced PwC One, an AI platform that delivers tax, audit, and consulting services directly to clients without a PwC professional in the loop. CEO Paul Griggs warned this week that partners who resist are "not going to be here that long." Accenture said something similar earlier this month.

Two of the largest professional services firms in the world have now publicly acknowledged that AI threatens their core business model. But the bigger question is not what happens to PwC and Accenture.

It is what happens to the technology vendors who depend on them.


Thoma Bravo’s Investor Meeting Sends a Warning RiskTech Cannot Ignore

Orlando Bravo did not mince words at Thoma Bravo’s annual investor meeting in Miami yesterday. Speaking exclusively with CNBC’s Leslie Picker on the floor of the event, the firm’s founder and managing partner addressed the AI disruption narrative head-on – and drew a sharp line between the software companies his firm owns and the ones it would not touch. “There are many, many software companies in the public markets that will be disrupted from AI,” Bravo told Picker. “Those companies were going to be disrupted anyway. AI will create that disruption a lot faster, and some of the decreases in their valuations are very warranted.”

Thoma Bravo manages over $183 billion in assets across roughly 80 enterprise software companies, making it the largest investment firm with concentrated exposure to the software sector. That portfolio visibility – into customer contracts, renewal rates, and the operating fundamentals of dozens of companies – gives Bravo’s assessment unusual weight. This was not a market prediction. It was a practitioner’s observation. The RiskTech industry should take it seriously.

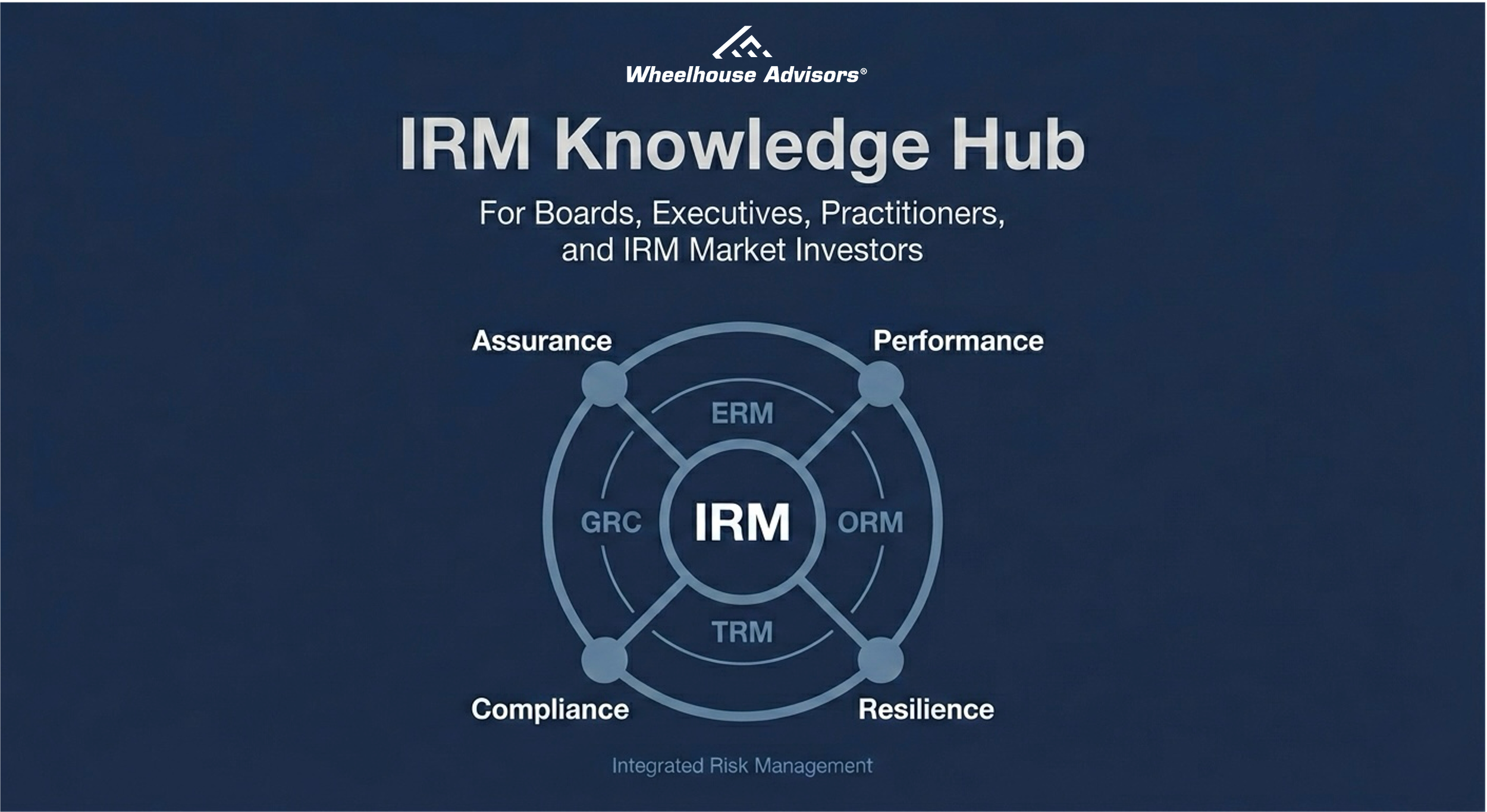

Wheelhouse Advisors Launches the IRM Knowledge Hub for Boards, Executives, Practitioners, and IRM Market Investors

Integrated Risk Management (IRM) is entering a new phase. Market conditions and operating realities are shifting at the same time, and the organizations best positioned to navigate that shift are the ones that have already built a coherent, shared foundation for how they define, measure, and manage risk. Wheelhouse Advisors built the IRM Knowledge Hub to provide exactly that foundation.

The Hub is a public reference destination designed to standardize how organizations define, communicate, and operationalize Integrated Risk Management. It consolidates IRM fundamentals, maturity progression, and technology market structure into a single, navigable location so stakeholders can align on what IRM is, what “complete” looks like, and how capability should evolve as risk becomes more digital, more interconnected, and more time-compressed.

At its core, the Hub defines IRM as a disciplined, organization-wide approach to identifying, assessing, and managing risk in explicit alignment with business strategy and performance, treating risk as a shared strategic asset rather than a set of isolated functional problems. It also frames IRM as the unification of four historically fragmented domains: ERM, ORM, TRM, and GRC.

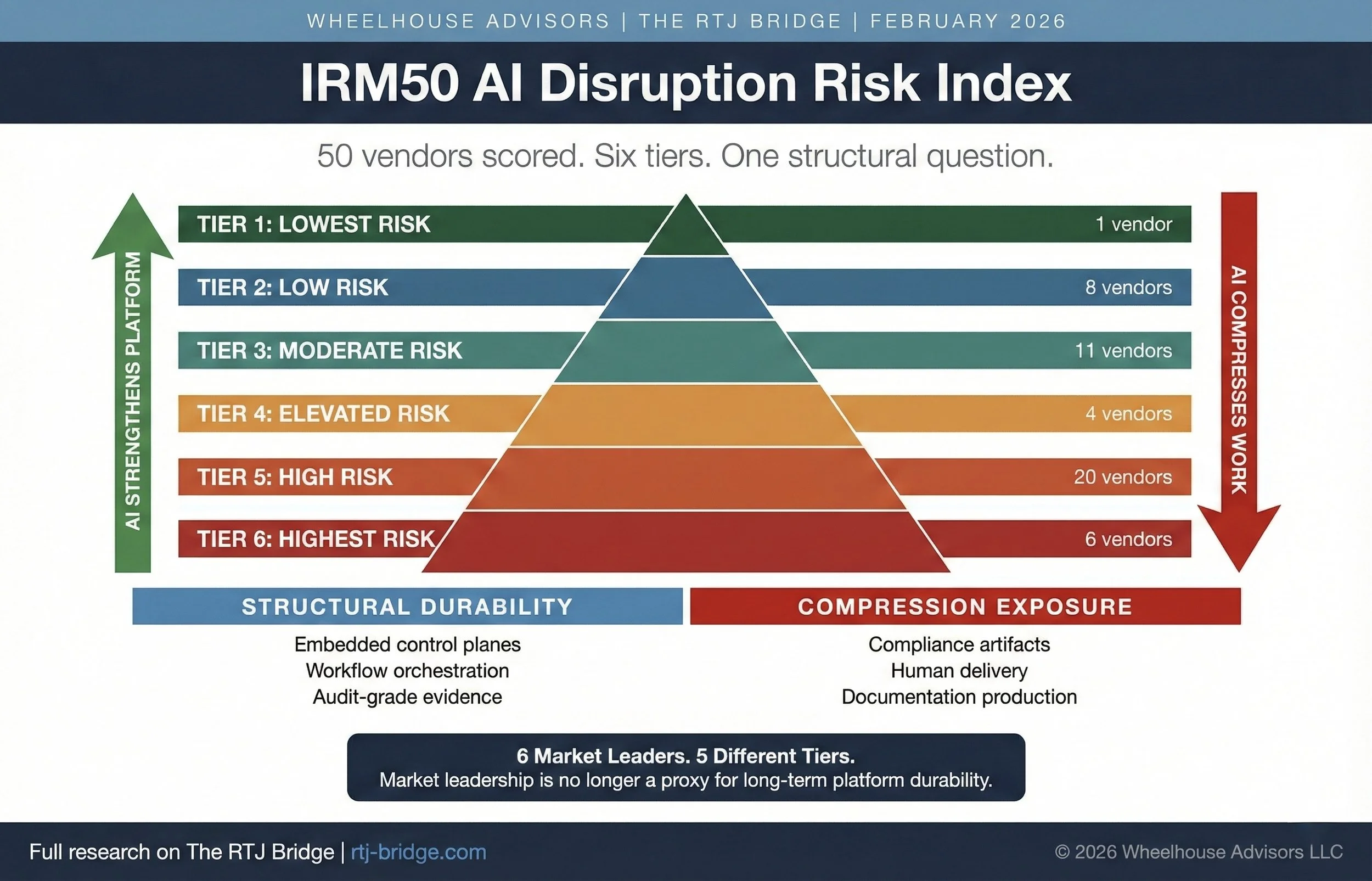

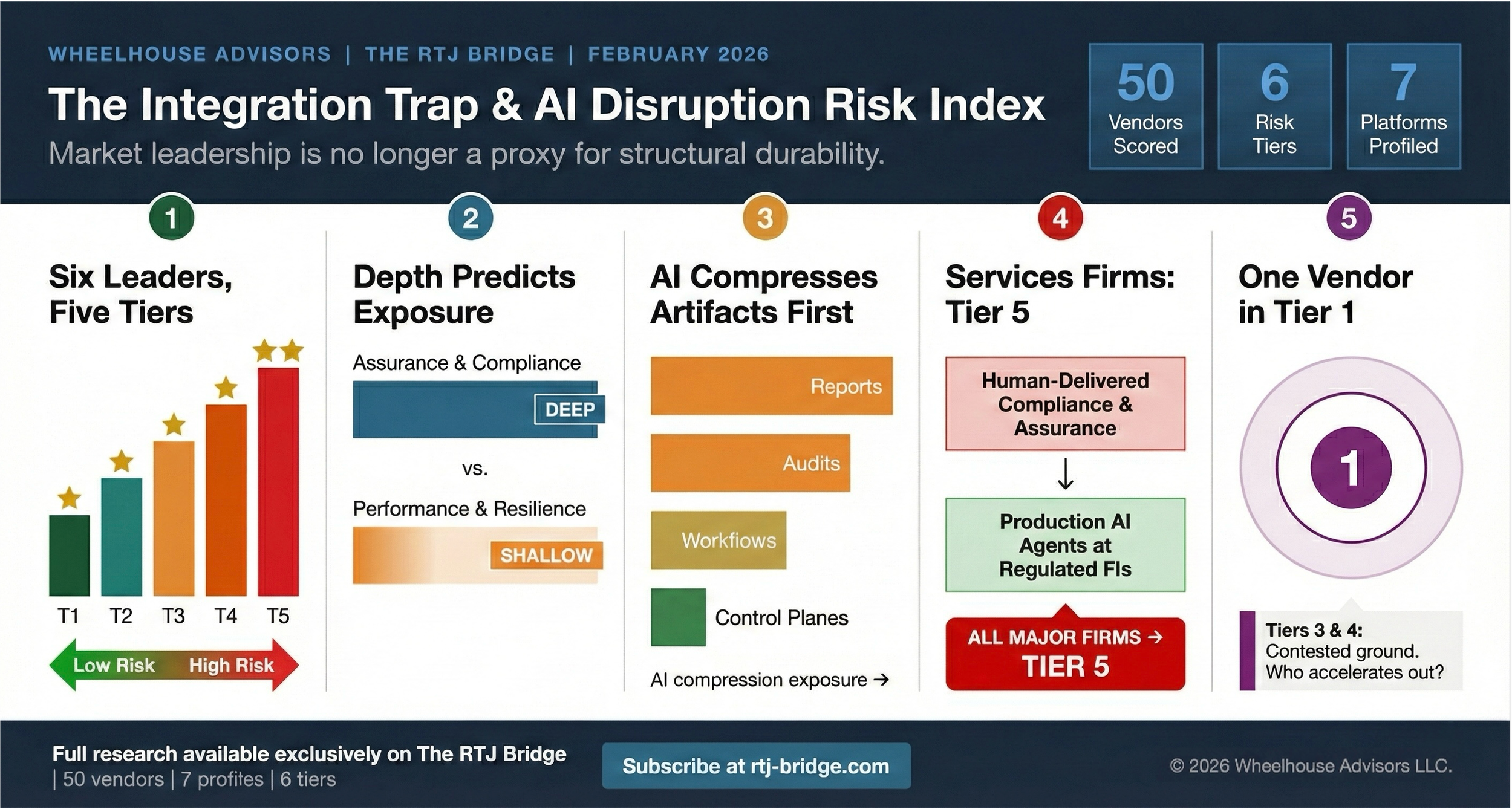

We Scored 50 IRM Vendors on AI Disruption Risk. Six Market Leaders Landed in Five Different Tiers.

The IRM market runs on two assumptions that deserve harder scrutiny. The first: that market leadership reflects structural durability. The second: that “integrated” platforms deliver the integration that enterprises actually need. This month, Wheelhouse Advisors publishes two companion research notes on The RTJ Bridge that challenge both assumptions directly.

The Integration Trap for GRC examines seven major GRC and IRM vendors and surfaces a structural pattern the market has not confronted honestly. The IRM50 AI Disruption Risk Index extends that analysis across the full IRM50 ecosystem and assigns every vendor a disruption exposure tier based on where AI will compress monetized work first. Together, they deliver a new lens for evaluating vendor durability that buyers, boards, and vendors themselves should read carefully.

This article previews both studies. The full research, including individual vendor assessments, tier assignments, and the analytical framework behind them, is available exclusively on The RTJ Bridge.

Why Data Streaming Is the Hidden Backbone of Autonomous IRM

Data streaming has become a foundational capability for modern enterprises. As organizations move away from periodic reporting and manual control cycles, the emphasis has shifted to continuous sensing, real-time telemetry, and rapid mitigation. These operational patterns depend on data in motion, not data at rest. Streaming architectures now sit at the center of this shift.

IBM’s acquisition of Confluent, announced today, reinforces this point. Confluent is the leading commercial platform built on Apache Kafka, one of the most widely adopted streaming technologies worldwide. The acquisition signals that streaming has moved from a niche data engineering function to a strategic capability that enables AI operations, continuous controls, and integrated risk programs. Enterprises are recognizing that autonomous risk management depends on steady, reliable streams of operational signals that can be sensed, analyzed, and acted upon in real time.

Petri and the Rise of Autonomous Risk Auditing

On October 6, 2025, Anthropic introduced Petri, the Parallel Exploration Tool for Risky Interactions, an open-source auditing agent that automatically probes large language models to detect and score risky behaviors. The release, while modest in presentation, may prove pivotal in how enterprises manage risk across autonomous systems.

Petri represents the maturation of AI safety research into a tangible, operational capability that bridges technology risk, assurance, and governance. More importantly, it signals the emergence of autonomous auditing as a new functional layer within Integrated Risk Management (IRM).

Palo Alto Networks CEO Warns of AI Agent Risks

On CNBC yesterday, Palo Alto Networks CEO Nikesh Arora issued one of the most direct warnings yet about the risks of enterprise AI agents. He noted that in the near future, “there’s gonna be more agents than humans running around trying to help you manage your enterprise.” If true, that represents not only an IT transformation, but a fundamental shift in the risk surface of every large organization.

Autonomous IRM, Investor Confidence, Cyberinsurance Risks, and Analyst Failures: Exclusive Insights from The RTJ Bridge

The landscape of risk management technology is undergoing rapid transformation, driven by advanced artificial intelligence, shifting investor priorities, and increasingly sophisticated cybersecurity threats. While many risk professionals rely on general market reports and commentary, actionable and forward-looking insights remain scarce. Subscribers to The RTJ Bridge, the premium insights platform from Wheelhouse Advisors, have early and exclusive access to proprietary analysis, data-driven recommendations, and strategic perspectives unmatched elsewhere.

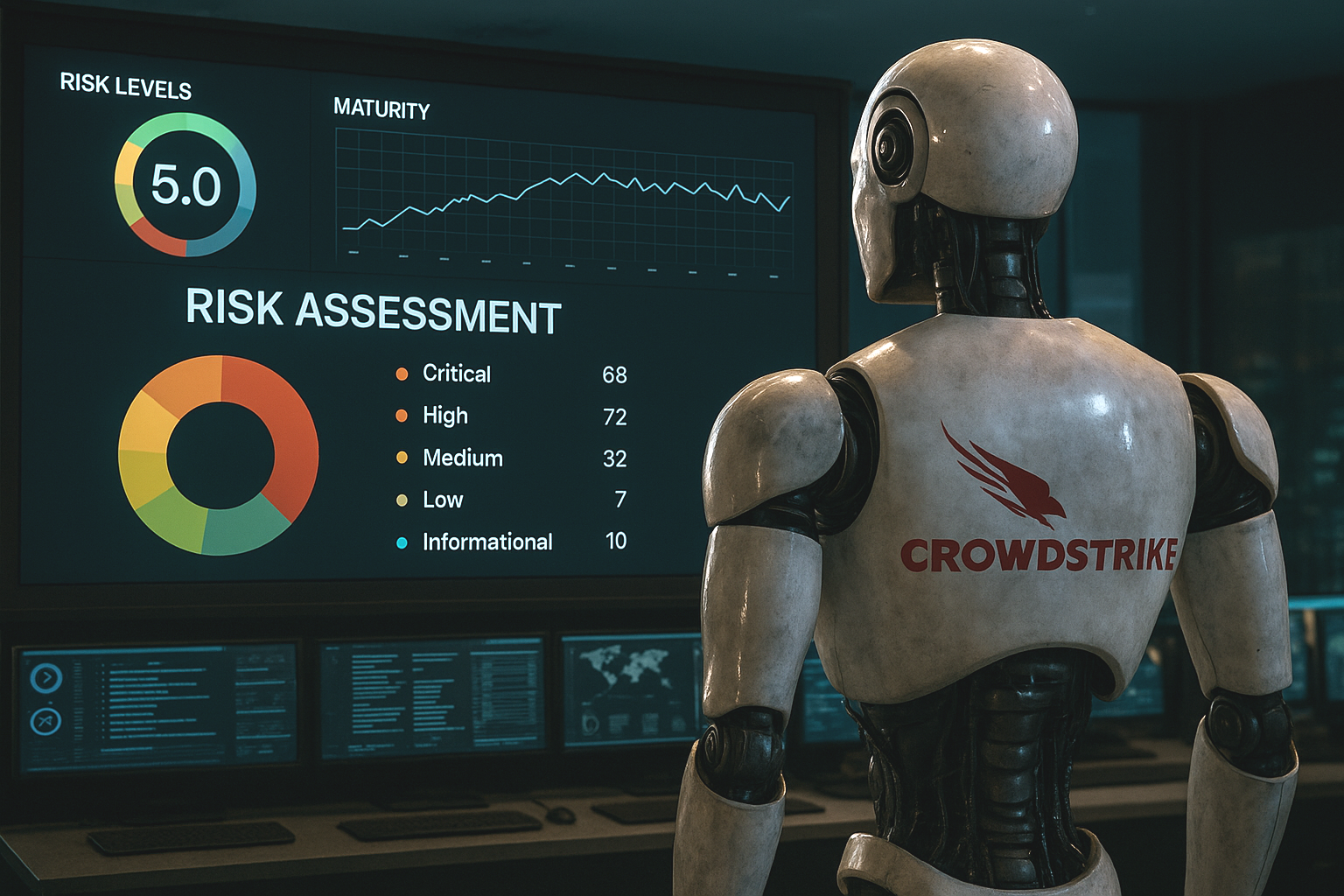

How CrowdStrike’s Agentic AI Accelerates Autonomous IRM

CrowdStrike’s launch of Charlotte AI—its agentic AI architecture now embedded within the Falcon platform—marks a decisive shift in how risk is not only detected, but addressed. With its triad of capabilities (Agentic Detection Triage, Agentic Response, and Agentic Workflows), Charlotte introduces a new operating model: one where AI systems autonomously assess, act, and learn within predefined parameters.

The implication for Integrated Risk Management (IRM) is profound. These are not just smarter alerts or faster forensics. They are machine-initiated decisions with immediate governance, compliance, and operational consequences. And that demands a new framework—one that aligns autonomous action with enterprise risk oversight.

The Coming Wave: Why AI-Fueled Cyber Crime Demands a New Layer of Risk Management

In June 2024, a ransomware attack on Synnovis, an NHS diagnostics provider, led to thousands of canceled surgeries and long-term patient harm, yet it barely registered in the headlines. A year later, an attack on Marks & Spencer, which temporarily left Percy Pig sweets and Colin the Caterpillar cakes off supermarket shelves, wiped £600 million off the company’s market cap and triggered nationwide panic.

This juxtaposition, as Misha Glenny eloquently observes in his Financial Times Weekend article, reveals something uncomfortable about both society’s perception of cyber risk and our structural ability to respond to it. But it also points to a larger and more pressing reality: AI is about to turn every cyber threat vector into a force multiplier—and the defensive tools most organizations rely on are no longer fit for purpose.

As AI matures into autonomous, agentic forms, we’re not just dealing with more attacks—we’re dealing with smarter, faster, and more scalable ones. The solution isn’t just better cybersecurity. It’s Integrated Risk Management (IRM)—and it must evolve as rapidly as the threat landscape.

Where Autonomous IRM Begins—And Where It Must Go Next

The Quiet Rise of Autonomous IRM—From the Middle Out

Autonomous IRM is no longer theoretical. AI-powered platforms are starting to deliver tangible value: agentic systems that simulate attacker behavior, validate control effectiveness, and recommend mitigation actions—often autonomously.

The June 5 announcement from Tuskira, integrating directly with ServiceNow’s Vulnerability Response and SecOps modules, is a prime example. By embedding simulation-backed scoring and posture-aware mitigation into operational workflows, Tuskira is delivering intelligence in real time.

But there’s something missing: the announcement doesn’t mention Integrated Risk Management (IRM) at all.

That silence is a signal. Tuskira operates in what Wheelhouse Advisors defines as Layer 3: Intelligence & Validation—the middle of the risk architecture. And while this layer is where automation is gaining traction, it’s also where many organizations are managing in isolation, without input from either end of the enterprise risk stack.

From Permit to Platform—How CTRL WRK Turns Lockout/Tagout into an Autonomous IRM Use Case

A high-risk, paper-bound safety workflow finds new life on the ServiceNow platform—signaling a broader shift toward AI-enabled operational risk intelligence.

What was once a clipboard-bound safety task has now become a signal of something larger: the acceleration of Autonomous Integrated Risk Management (Autonomous IRM) through purpose-built, domain-native micro-apps. On June 2, CTRL WRK—a GenAI-powered “Control of Work” (CoW) application focused on lockout/tagout (LOTO) permitting—launched on the ServiceNow Store. While its function is precise, the implications are far-reaching.

This is more than digitization. It’s the embodiment of a broader market shift: from static compliance toward dynamic, AI-enabled risk management embedded directly into operational workflows.

Generative AI Is Steering Banks Toward Autonomous IRM—But the Bridge Isn’t Finished Yet

When McKinsey & Company published “How generative AI can help banks manage risk and compliance” in March 2024, it put blue-chip credibility behind a growing consensus: large language models and related GenAI tools will automate swaths of the three-lines-of-defense model and upend conventional governance, risk, and compliance (GRC) workflows. What McKinsey did not say, but unmistakably implied, is that the old compliance-first paradigm is now on borrowed time. The firm’s use-case catalogue, from virtual regulatory advisors to code-generating “risk bots,” maps neatly onto the early layers of Autonomous Integrated Risk Management (IRM): continuously sensing risk, generating controls, and feeding decision-grade insight back into the business.

Yet the report also reveals a tension. McKinsey still frames GenAI as a helper inside discrete risk silos, guarded by human-in-the-loop checkpoints. Autonomous IRM envisions something bolder: an AI-directed control fabric that dissolves those silos, embeds itself in front-line processes, and—over time—lets the machine take the first swing at routine risk decisions while humans govern the exceptions.

Live from RSA: Autonomous IRM Moves from Vision to Reality

The RSA Conference is renowned for highlighting significant shifts in cybersecurity and risk management. This year, alongside familiar conversations about persistent cybersecurity threats and regulatory pressures, a deeper transformation is occurring: the rise of Autonomous Integrated Risk Management (Autonomous IRM). Vendors at RSA 2025 are showcasing solutions that go beyond merely automating routine tasks, moving toward independently identifying, assessing, and mitigating risks across enterprise ecosystems without constant human intervention.